While great new benefits are widely expected, most experts also express some degree of worry over how today’s digital trends may influence life in the near future. More than a third, 37%, are more concerned than excited, and 42% are equally concerned and excited about what they expect to see as they imagine the next decade-plus.

The content for this report was gathered in a canvassing of experts conducted in early 2023 by Elon University’s Imagining the Internet Center and Pew Research Center. They were asked to consider today’s trends and predict the best and worst of digital change by 2035 in the realms of human-centered development of digital tools and systems; human connections, governance and institutions; human rights; human knowledge; and human health and well-being.

Results released June 21, 2023 – Elon University’s Imagining the Internet Center and Pew Research Center invited thousands of experts to share their opinions and predictions about the evolution and impact of digital advances by 2035. More than 300 technology innovators, developers, business and policy leaders, researchers and activists responded to this canvassing, which took place between December 27, 2022, and February 21, 2023. Several hundred wrote explanatory comments in response to the study’s questions. This report analyzes and organizes more than 200 pages of expert responses and reveals the most-prevalent themes. It includes thousands of intelligent insights about the likely future based on today’s trends.

This page carries the full 232-page report in one long scroll; you can also download a PDF by clicking on the related graphic. If you wish, you can select a link below to read only the expert responses, with no sort or analysis:

– Read only the for-credit experts’ views on the Best and Worst of Digital Life 2035

– Read only the anonymous experts’ views on the Best and Worst of Digital Life 2035

– Download or read the print version of the report

This main report web page contains: 1) the research question; 2) a very brief outline of the most common themes found among these experts’ remarks; 3) the full report with analysis, which weaves together the experts’ written submissions in chapter form; 4) a detailed methodology section, which is located after the final chapter. The research question follows.

The Prompt – The best and worst of digital life in 2035: We seek your insights about the future impact of digital change. This survey contains three substantive questions about that. The first two are open-ended questions. The third asks how you feel about the future you see.

The first open-ended question: As you look ahead to 2035, what are the BEST AND MOST BENEFICIAL changes that are likely to occur by then in digital technology and humans’ use of digital systems? We are particularly interested in your thoughts about how developments in digital technology and humans’ uses of it might improve human-centered development of digital tools and systems; human connections, governance and institutions; human rights; human knowledge; and human health and well-being.

The second open-ended question: As you look ahead to the year 2035, what are the MOST HARMFUL OR MENACING changes that are likely to occur by then in digital technology and humans’ use of digital systems? We are particularly interested in your thoughts about how developments in digital technology and humans’ uses of it are likely to be detrimental to human-centered development of digital tools and systems; human connections, governance and institutions; human rights; human knowledge; and human health and well-being.

The third and final question: On balance, how would you say that the developments you foresee in digital technology and uses of it by 2035 make you feel? (Choose one option.)

- More excited than concerned

- More concerned than excited

- Equally excited and concerned

- Neither excited nor concerned

- I don’t think there will be much real change

Results for the third question, regarding the respondents’ general mood about the changes they foresee by 2035:

- 42% of these experts said they are equally excited and concerned about the changes in humans-plus-tech evolution they expect to see by 2035

- 37% said they are more concerned than excited about the change they expect

- 18% said they are more excited than concerned about expected change by 2035

- 2% said they are neither excited nor concerned

- 2% said they don’t think there will be much real change by 2035

Common themes found among the experts’ qualitative responses:

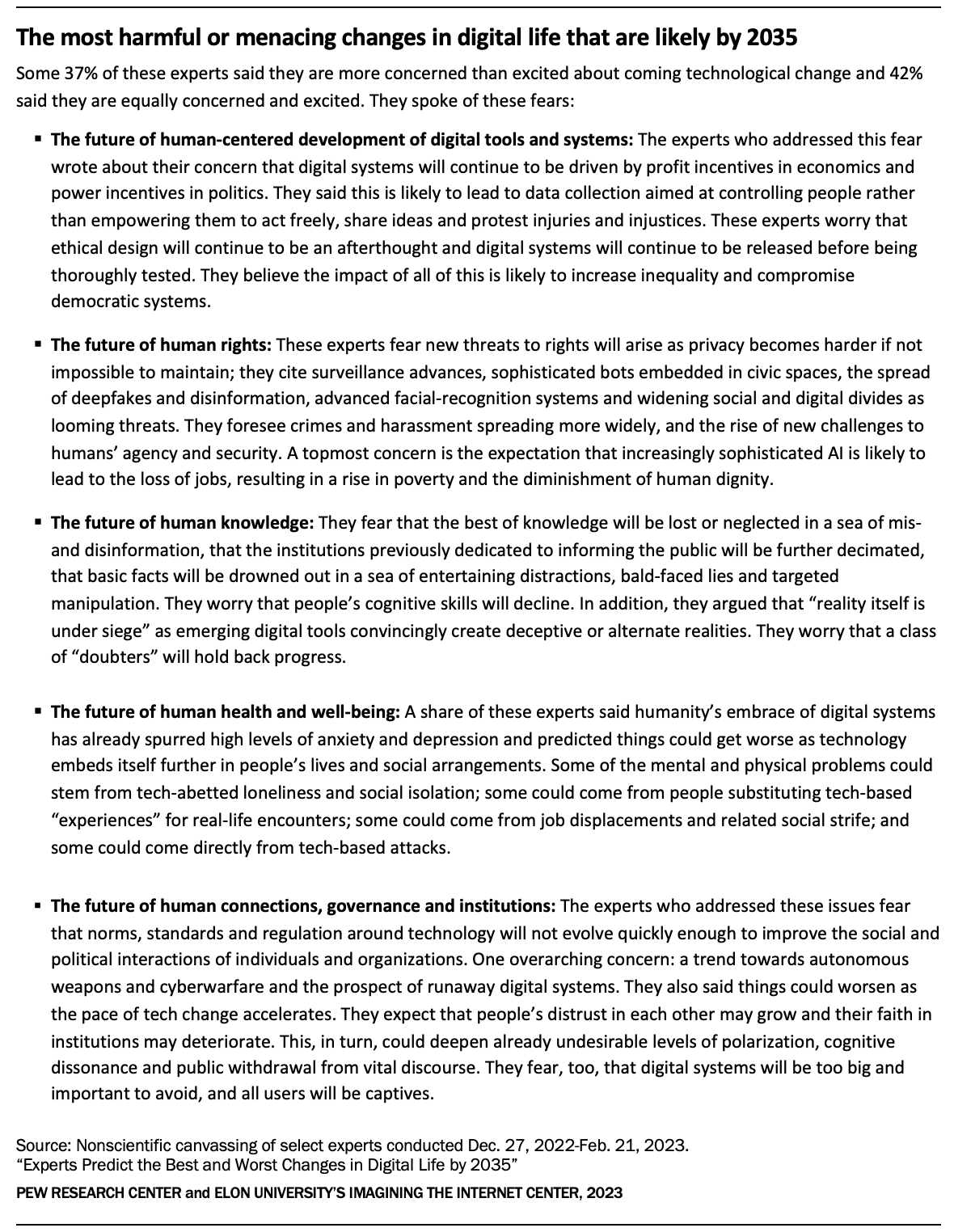

Some 37% of these experts said they are more concerned than excited about coming technological change and 42% said they are equally concerned and excited. They spoke of these fears:

* The future of human-centered development of digital tools and systems: The experts who addressed this fear wrote about their concern that digital systems will continue to be driven by profit incentives in economics and power incentives in politics. They said this is likely to lead to data collection aimed at controlling people rather than empowering them to act freely, share ideas and protest injuries and injustices. These experts worry that ethical design will continue to be an afterthought and digital systems will continue to be released before being thoroughly tested. They believe the impact of all of this is likely to increase inequality and compromise democratic systems.

* The future of human rights: These experts fear new threats to rights will arise as privacy becomes harder if not impossible to maintain; they cite surveillance advances, sophisticated bots embedded in civic spaces, the spread of deepfakes and disinformation, advanced facial-recognition systems and widening social and digital divides as looming threats. They foresee crimes and harassment spreading more widely, and the rise of new challenges to humans’ agency and security. A topmost concern is the expectation that increasingly sophisticated AI is likely to lead to the loss of jobs, resulting in a rise in poverty and the diminishment of human dignity.

* The future of human knowledge: They fear that the best of knowledge will be lost or neglected in a sea of mis- and disinformation, that the institutions previously dedicated to informing the public will be further decimated, and that basic facts will be drowned out by entertaining distractions, bald-faced lies and targeted manipulation. They worry that people’s cognitive skills will decline. In addition, they argue that “reality itself is under siege” as emerging digital tools convincingly create deceptive or alternate realities. They worry that a class of “doubters” will hold back progress.

* The future of human health and well-being: A share of these experts said humanity’s embrace of digital systems has already spurred high levels of anxiety and depression and predicted things could get worse as technology embeds itself further in people’s lives and social arrangements. Some of the mental and physical problems could stem from tech-abetted loneliness and social isolation; some could come from people substituting tech-based experiences for real-life encounters; some could come from job displacements and related social strife; and some could come directly from tech-based attacks.

* The future of human connections, governance and institutions: The experts who addressed these issues fear that norms, standards and regulation around technology will not evolve quickly enough to improve the social and political interactions of individuals and organizations. One overarching concern: a trend towards autonomous weapons and cyberwarfare and the prospect of runaway digital systems. They also said things could worsen as the pace of tech change accelerates. They expect that people’s distrust in each other may grow and their faith in institutions may deteriorate. This, in turn, could deepen already undesirable levels of polarization, cognitive dissonance and public withdrawal from vital discourse. They fear, too, that digital systems will be too big and important to avoid, and all users will be captives.

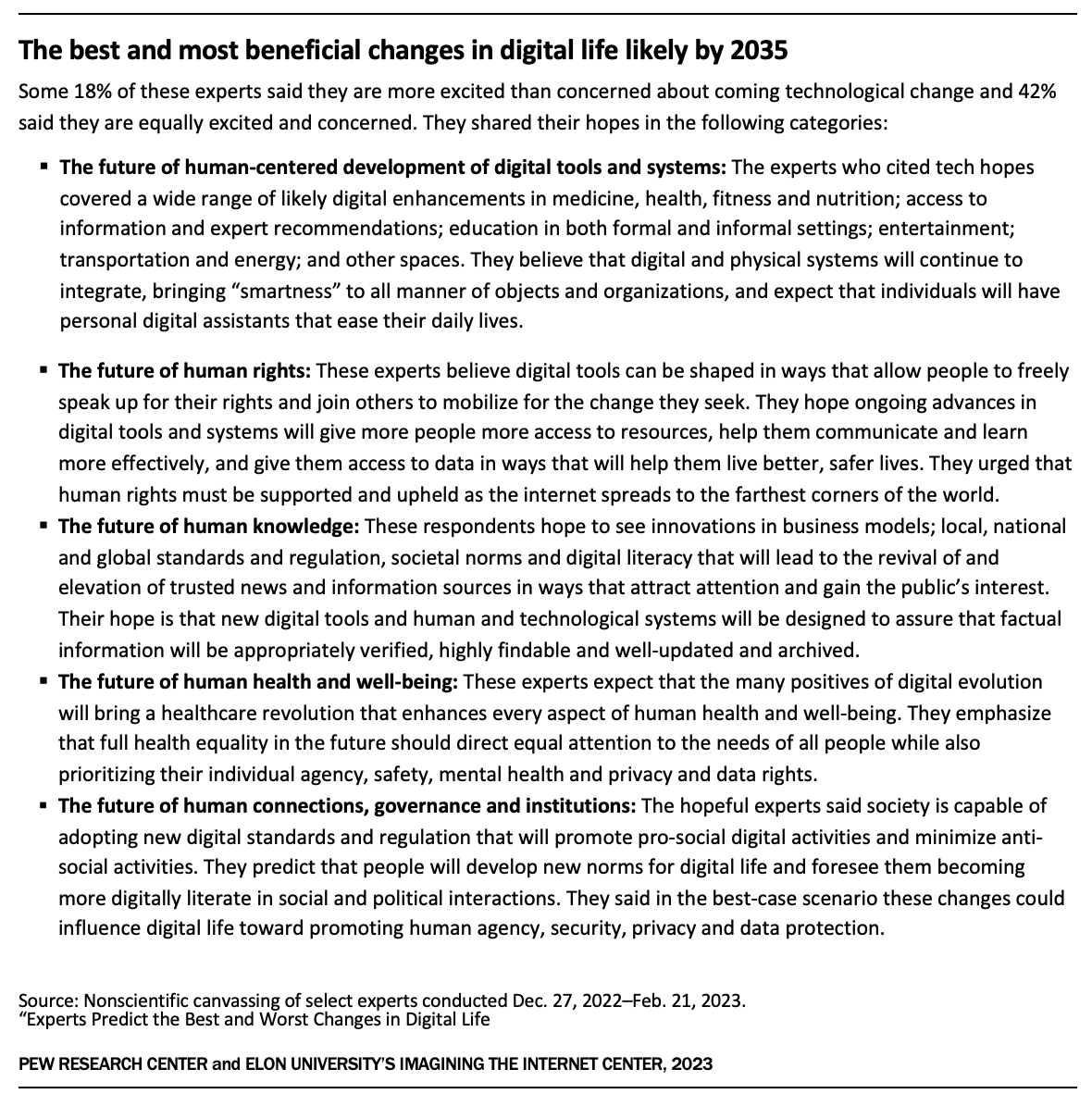

Some 18% of these experts said they are more excited than concerned about coming technological change and 42% said they are equally excited and concerned. They shared their hopes for beneficial change in these categories:

* The future of human-centered development of digital tools and systems: The experts who cited tech hopes covered a wide range of likely digital enhancements in medicine, health, fitness and nutrition; access to information and expert recommendations; education in both formal and informal settings; entertainment; transportation and energy; and other spaces. They believe that digital and physical systems will continue to integrate, bringing “smartness” to all manner of objects and organizations, and expect that individuals will have personal digital assistants that ease their daily lives.

* The future of human rights: These experts believe digital tools can be shaped in ways that allow people to freely speak up for their rights and join others to mobilize for the change they seek. They hope ongoing advances in digital tools and systems will give more people more access to resources, help them communicate and learn more effectively, and give them access to data in ways that will help them live better, safer lives. They urged that human rights must be supported and upheld as the internet spreads to the farthest corners of the world.

* The future of human knowledge: These respondents hope to see innovations in business models; local, national and global standards and regulation; societal norms; and digital literacy that will lead to the revival and elevation of trusted news and information sources in ways that attract attention and gain the public’s interest. Their hope is that new digital tools and human and technological systems will be designed to ensure that factual information will be appropriately verified, highly findable and well-updated and archived.

* The future of human health and well-being: These experts expect that the many positives of digital evolution will bring a healthcare revolution that enhances every aspect of human health and well-being. They emphasize that full health equality in the future should direct equal attention to the needs of all people while also prioritizing their individual agency, safety, mental health and privacy and data rights.

* The future of human connections, governance and institutions: The hopeful experts said society is capable of adopting new digital standards and regulation that will promote pro-social digital activities and minimize anti-social activities. They predict that people will develop new norms for digital life and foresee them becoming more digitally literate in social and political interactions. They said in the best-case scenario these changes could influence digital life toward promoting human agency, security, privacy and data protection.

These themes are repeated in shareable graphic images in two tables included below, in the full report.

Full Report with Complete Findings

Experts Predict the Best and Worst of Digital Life By 2035

Most have mixed views about where today’s trends in digital life might lead, and many are more fearful than hopeful. While they expect great benefits in healthcare and education, they have deep concerns about potential menaces to people’s and society’s overall well-being.

Spurred by the splashy emergence of generative artificial intelligence and an array of other AI applications, experts have great expectations for digital advances across many aspects of life by 2035. They especially anticipate striking improvements in healthcare and education. They foresee a world in which wonder drugs are conceived and enabled in digital spaces, where personalized medical care gives patients precisely what they need when they need it, where people wear smart eyewear and earbuds that keep them connected to the people, things and information around them, where AI systems can nudge discourse into productive and fact-based conversations, and where progress will be made in environmental sustainability, climate action and pollution prevention.

At the same time, they worry about the darker sides of many of the developments they celebrate. Key examples:

- Some expressed fears that align with the statement recently released by technology leaders and AI specialists arguing that AI poses the “risk of extinction” for humans that should be treated with the same urgency as pandemics and nuclear war.

- Some point to clear problems that have been identified with generative AI systems, which produce erroneous and unexplainable outputs and are already being used to foment misinformation and trick people.

- Some are anxious about the seemingly unstoppable speed and scope of digital tech that they fear could enable blanket surveillance of vast populations and could destroy the information environment, undermining democratic systems with deepfakes, misinformation and harassment.

- They fear massive unemployment, the spread of global crime, and further concentration of global wealth and power in the hands of the founders and leaders of a few large companies.

- They also speak about how the weaponization of social media platforms might create population-level stress, anxiety, depression and feelings of isolation.

In sum, the experts in this canvassing noted that humans’ choices to use technologies for good or ill will change the world significantly.

These predictions emerged from a canvassing of technology innovators, developers, business and policy leaders, researchers and academics by Pew Research Center and Elon University’s Imagining the Internet Center. Some 305 responded to this query:

As you look ahead to the year 2035, what are the BEST AND MOST BENEFICIAL changes that are likely to occur by then in digital technology and humans’ use of digital systems? … What are the MOST HARMFUL OR MENACING changes likely to occur?

Many of these experts wrote long, detailed assessments describing potential opportunities and threats they see to be most likely. The full question prompt specifically encouraged them to share their thoughts about both kinds of impacts – positive and negative. And our question invited them to think about the benefits and costs of five specific domains of life:

- Human-centered development of digital tools and systems

- Human rights

- Human knowledge

- Human health and well-being

- Human connections, governance and institutions

They were also asked to indicate how they feel about the changes they foresee.

- 42% of these experts said they are equally excited and concerned about the changes in humans-plus-tech evolution they expect to see by 2035

- 37% said they are more concerned than excited about the change they expect

- 18% said they are more excited than concerned about expected change by 2035

- 2% said they are neither excited nor concerned

- 2% said they don’t think there will be much real change by 2035

Many of the respondents quite succinctly outlined their expectations for the best and worst in digital change by 2035. Here below are some of those comments. (The remarks made by the respondents to this canvassing reflect their personal positions and are not the positions of their employers. The descriptions of their leadership roles help identify their background and the locus of their expertise. Some responses are lightly edited for style and readability.)

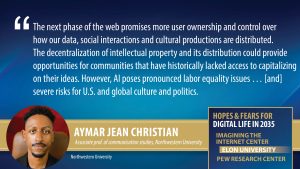

Aymar Jean Christian, associate professor of communication studies at Northwestern University and adviser to the Center for Critical Race Digital Studies, said, “Decentralization is a promising trend in platform distribution. Web 2.0 companies grew powerful by creating centralized platforms and amassing large amounts of social data. The next phase of the web promises more user ownership and control over how our data, social interactions and cultural productions are distributed. The decentralization of intellectual property and its distribution could provide opportunities for communities that have historically lacked access to capitalizing on their ideas. Already users and grassroots organizations are experimenting with new decentralized governance models, innovating in the longstanding hierarchical corporate structure.

“However, the automation of story creation and distribution through artificial intelligence poses pronounced labor equality issues as corporations seek cost benefits for creative content and content moderation on platforms. These AI systems have been trained on the un- or under-compensated labor of artists, journalists and everyday people, many of them underpaid labor outsourced by U.S.-based companies. These sources may not be representative of global culture or hold the ideals of equality and justice. Their automation poses severe risks for U.S. and global culture and politics. As the web evolves, there remain big questions as to whether equity is possible or if venture capital and the wealthy will buy up all digital intellectual property. Conglomeration among firms often leads to market manipulation, labor inequality and cultural representations that do not reflect changing demographics and attitudes. And there are also climate implications for many new technological developments, particularly concerning the use of energy and other material natural resources.”

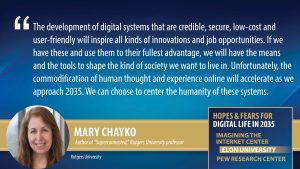

Mary Chayko, sociologist, author of “Superconnected” and professor of communication and information at Rutgers University, wrote, “As communication technology advances into 2035 it will allow people to learn from one another in ever more diverse, multifaceted, widely distributed social networks. We will be able to grow healthier, happier, more knowledgeable and more connected as we create and traverse these networked pathways together. The development of digital systems that are credible, secure, low-cost and user-friendly will inspire all kinds of innovations and job opportunities. If we have these types of networks and use them to their fullest advantage, we will have the means and the tools to shape the kind of society we want to live in.

“Unfortunately, the commodification of human thought and experience online will accelerate as we approach 2035. Technology is already used not only to harvest, appropriate and sell our data, but to manufacture and market data that simulates the human experience, as with applications of artificial intelligence. This has the potential to degrade and diminish the specialness of being human, even as it makes some humans very rich. The extent and verisimilitude of these practices will certainly increase as technology permits the replication of human thought and likeness in ever more realistic ways. But it is human beings who design, develop, unleash, interpret and use these technological tools and systems. We can choose to center the humanity of these systems and to support those who do so, and we must.”

Sean McGregor, founder of the Responsible AI Collaborative, said, “By 2035, technology will have developed a window into many inequities of life, thereby empowering individuals to advocate for greater access to and authority over decision-making currently entrusted to people with inscrutable agendas and biases. The power of the individual will expand with communication, artistic and educational capacities not known throughout previous human history.

“However, if trends remain as they are now, people, organizations and governments interested in accumulating power and wealth over the broader public interest will apply these technologies toward increasingly repressive and extractive aims.

“It is vital that there be a concerted, coordinated and calm effort to globally empower humans in the governance of artificial intelligence systems. This is required to avoid the worst possibilities of complex socio-technical systems. At present, we are woefully unprepared and show no signs of beginning collaborative efforts of the scale required to sufficiently address the problem.”

Laurie L. Putnam, educator and communications consultant, wrote, “There is great potential for digital technologies to improve health and medical care. Out of necessity, digital healthcare will become a norm. Remote diagnostics and monitoring will be especially valuable for aging and rural populations that find it difficult to travel. Connected technologies will make it easier for specialized medical personnel to work together from across the country and around the world. Medical researchers will benefit from advances in digital data, tools and connections, collaborating in ways never before possible. However, many digital technologies are taking more than they give. And what we are giving up is difficult, if not impossible, to get back.

“But today’s digital spaces, populated by the personal data of people in the real world, are lightly regulated and freely exploited. Technologies like generative AI and cryptocurrency are costing us more in raw energy than they are returning in human benefit. Our digital lives are generating profit and power for people at the top of the pyramid without careful consideration of the shadows they cast below, shadows that could darken our collective future.

“If we want to see different outcomes in the coming years, we will need to rethink our ROI [return on investment] calculations and apply broader, longer-term definitions of ‘return.’ We are beginning to see more companies heading in this direction, led by people who aren’t prepared to sacrifice entire societies for shareholders’ profits, but these are not yet the most-powerful forces. Power must shift and priorities must change.”

David Clark, Internet Hall of Fame member and senior research scientist at MIT’s Computer Science and Artificial Intelligence Laboratory, proposed improvements, writing, “To have an optimistic view of the future you must imagine that several potential positives will come to fruition to overcome big issues:

- “The currently rapid rate of change slows, helping us to catch up.

- The Internet becomes much more accessible and inclusive, and the numbers of the unserved or poorly served become a much smaller fraction of the population.

- Over the next 10 years the character of critical applications such as social media mature and stabilize, and users become more sophisticated about navigating the risks and negatives.

- Increasing digital literacy helps all users to better avoid the worst perils of the Internet experience.

- A new generation of social media emerges, with less focus on user profiling to sell ads, less emphasis on unrestrained virality and more of a focus on user-driven exploration and interconnection.

- And the best thing that could happen is that application providers move away from the advertising-based revenue model and establish an expectation that users actually pay. This would remove many of the distorting incentives that plague the ‘free’ Internet experience today. Consumers today already pay for content (movies, sports and games, in-game purchases and the like). It is not necessary that the troublesome advertising-based financial model should dominate.”

The main themes cited among these 305 respondents’ hopes for the best and most beneficial change between 2023 and 2035 are outlined in the graphic below:

Here is a small selection of responses touching on many aspects of the “harmful” themes:

Herb Lin, senior research scholar for cyber policy and security at Stanford University’s Center for International Security and Cooperation, said, “My best hope is that human wisdom and willingness to act will not lag so much that they are unable to respond effectively to the worst of the new challenges accompanying innovation in digital life.

“The worst likely outcome is that humans will develop too much trust and faith in the utility of the applications of digital life and become ever more confused between what they want and what they need. The result will be that societal actors with greater power than others will use the new applications to increase these power differentials for their own advantage. The most beneficial change in digital life might simply be that things don’t get much worse than they are now with respect to pollution in and corruption of the information environment. Applications such as ChatGPT will get better without question, but the ability of humans to use such applications wisely will lag.”

Erhardt Graeff, a researcher at Olin College of Engineering who is expert in the design and use of technology for civic and political engagement, said, “I worry that humanity will largely accept the hyper-individualism and social and moral distance made possible by digital technology and assume that this is how society should function. I worry that our social and political divisions will grow wider if we continue to invest ourselves personally and institutionally in the false efficiencies and false democracies of Twitter-like social media.”

Ayden Férdeline, Landecker Democracy Fellow at Humanity in Action, wrote, “There are organizations today that profit from being perceived as ‘merchants of truth.’ The judicial system is based on the idea that the truth can be established through an impartial and fair hearing of evidence and arguments. Historically, we have trusted those actors and their expertise in verifying information.

“As we transition to building trust into digital media files through techniques like authentication-at-source and blockchain ledgers that provide an audit trail of how a file has been altered over time, there may be attempts to use regulation to limit how we can cryptographically establish the authenticity and provenance of digital media.

“More online regulation is inevitable given the importance of the Internet economically and socially and the likelihood that digital media will increasingly be used as evidence in legal proceedings. But will we get the regulation right? Will we regulate digital media in a way that builds trust, or will we create convoluted, expensive authentication techniques that increase the cost of justice?”

A computer and data scientist at a major U.S. university whose work involves artificial neural networks predicted, “The following potential harmful outcomes are possible if trendlines continue as they have been to this point:

- We accidentally incentivize powerful general-purpose AI systems to seek resources and influence without first making sufficient progress on alignment, eventually leading to the permanent disempowerment of human institutions.

- Short of that, misuse of similarly powerful general-purpose technologies leads to extremely effective political surveillance and substantially improved political persuasion, allowing wealthy totalitarian states to end any meaningful internal pressure toward change.

- The continued automation of software engineering leads large capital-rich tech companies to take on an even more extreme ratio of money and power to number of employees, making it easier for them to move across borders and making it even harder to meaningfully regulate them.”

Henning Schulzrinne, Internet Hall of Fame member and co-chair of the Internet Technical Committee of the IEEE, warned, “The concentration of ad revenue and the lack of a viable alternative source of income will further diminish the reach and capabilities of local news media in many countries, degrading the information ecosystem. This will increase polarization, facilitate government corruption and reduce citizen engagement.”

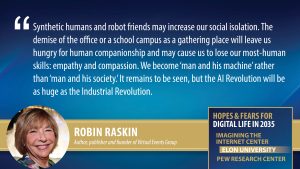

Robin Raskin, author, publisher and founder of the Virtual Events Group, said, “Synthetic humans and robot friends may increase our social isolation. The demise of the office or a school campus as a gathering place will leave us hungry for human companionship and may cause us to lose our most-human skills: empathy and compassion. We become ‘man and his machine’ rather than ‘man and his society.’

“The consumerization of AI will augment, if not replace, most white-collar jobs, including in traditional office work, advertising and marketing, writing and programming. Since work won’t be ‘a thing’ anymore we’ll need to find some means of compensation for our contribution to humanity. How much we contribute to the web? A Universal Basic Income because we were the ones who taught AI to do our jobs? It remains to be seen, but the AI Revolution will be as huge as the Industrial Revolution.

“Higher education will face a crisis like never before. Exorbitant pricing and lack of parity with the real world make college seem quite antiquated. I’m wagering that 50% of higher education in the United States will be forced to close down. We will devise other systems of degrees and badges to prove competency.

“The most critical metaverse will be a digital twin of everything – cities, schools and factories, for example. These twins coupled with IoT devices will make it possible to create simulations, inferences and prototypes for knowing how to optimize for efficiency before ever building a single thing.”

Jim Fenton, a longtime leader in the Internet Engineering Task Force who has worked over the past 35 years at Altmode Networks, Neustar and Cisco Systems, said, “I am particularly concerned about the increasing surveillance associated with digital content and tools. Unfortunately, there seems to be a counter-incentive for governments to legislate for privacy, since they are often either the ones doing the surveilling or they consume the information collected by others. As the public realizes more and more about the ways they are watched, it is likely to affect their behavior and mental state.”

The longtime director of research for a global futures project wrote, “Human rights will become an oxymoron. Censorship, social credit and around-the-clock surveillance will become ubiquitous worldwide; there is nowhere to hide from global dictatorship. Human governance will fall into the hands of a few unelected dictators. Human knowledge will wane and there will be a growing idiocracy due to the public’s digital brainwashing and the snowballing of unreliable, misleading, false information. Science will be hijacked and only serve the interests of the dictator class. In this setting, human health and well-being is reserved for the privileged few; for the majority, it is completely unconsidered. Implanted chips constantly track the health of the general public, and when they become a social burden their lives are terminated.”

Several main themes also emerged among these experts’ expectations for the best and most beneficial change in digital life between 2023 and 2035. They are cited in this table:

Here is a small selection of responses that touch on those themes:

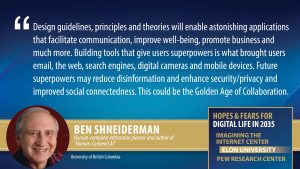

Ben Shneiderman, widely respected human-computer interaction pioneer and author of “Human-Centered AI,” said, “A human-centered approach to technology development is driven by deep understanding of human needs, which leads to design-thinking strategies that bring successful products and services. Human-centered user interface design guidelines, principles and theories will enable future designers to create astonishing applications that facilitate communication, improve well-being, promote business activities and much more.

“Building tools that give users superpowers is what brought users email, the web, search engines, digital cameras and mobile devices. Future superpowers could enable reduction of disinformation, greater security/privacy and improved social connectedness. This could be the Golden Age of Collaboration, with remarkable global projects such as developing a COVID-19 vaccine in 42 days.

“The future could be made brighter if similar efforts were devoted to fighting climate change, restoring the environment, reducing inequality and supporting the 17 UN Sustainable Development Goals. Equitable and universal access to technology could improve the lives of many, including those users with disabilities. The challenge will be to ensure human control, while increasing the level of automation.”

Rich Salz, principal engineer at Akamai Technologies, predicted, “We will see a proliferation of AI systems to help with medical diagnosis and research. This may cover a wide range of applications, such as: expert systems to detect breast cancer or other X-ray/imaging analysis; protein folding, etc., and discovery of new drugs; better analytics on drug and other testing; limited initial consultation for doing diagnosis at medical visits. Similar improvements will be seen in many other fields, for instance, astronomical data-analysis tools.”

Deanna Zandt, writer, artist and award-winning technologist, said, “I continue to be hopeful that new platforms and tech will find ways around the totalitarian capitalist systems we live in, allowing us to connect with each other on fundamentally human levels. My own first love of the internet was finding out that I wasn’t alone in how I felt or in the things I liked and finding community in those things. Even though many of those protocols and platforms have been co-opted in service of profit-making, developers continue to find brilliant paths of opening up human connection in surprising ways. I’m also hopeful the current trend of hypercapitalistic tech driving people back to more fundamental forms of internet communication will continue. Email as a protocol has been around for how long? And it’s still, as much as we complain about its limitations, a main way we connect.”

Jonathan Stray, senior scientist at the Berkeley Center for Human-Compatible AI, studying algorithms that select and rank content, predicted, “Among the developments we’ll see come along well are: Self-driving cars will reduce congestion, carbon emissions and road accidents. Automated drug discovery will revolutionize the use of pharmaceuticals. This will be particularly beneficial where speed or diversity of development is crucial, as in cancer, rare diseases and antibiotic resistance. We will start to see platforms for political news, debate and decision-making that are designed to bring out the best of us, through sophisticated combinations of human and automated moderation. AI assistants will be able to write sophisticated, well-cited research briefs on any topic. Essentially, most people will have access to instant-specialist literature reviews.”

Kay Stanney, CEO and founder of Design Interactive, commented, “Human-centered development of digital tools can profoundly impact the way we work and learn. Specifically, by coupling digital phenotypes (i.e., real-time, moment-by-moment quantification of the individual-level human phenotype, in situ, using data from personal digital devices, in particular smartphones) with digital twins (i.e., digital representation of an intended or actual real-world physical product, system or process), it will be possible to optimize both human and system performance and well-being. Through this symbiosis, interactions between humans and systems can be adapted in real time to ensure the system gets what it needs (e.g., predicted maintenance) and the human can get what it needs (e.g., guided stress-reducing mechanisms), thereby realizing truly transformational gains in the enterprise.”

Juan Carlos Mora Montero, coordinator of post-graduate studies in planning at the Universidad Nacional de Costa Rica, said, “The greatest benefit related to the digital world is that technology will allow people to have access to equal opportunities both in the world of work and in culture, allowing them to discover other places, travel, study, share and enjoy spending time in real-life experiences.”

Gus Hosein, executive director of Privacy International, commented, “Direct human connections will continue to grow over the next decade-plus, with more local community-building and not as many global or regional or national divisions. People will have more time and a more-sophisticated appreciation for the benefits and limits of technology. While increased electrification will result in ubiquity of digital technology, people will use it more seamlessly, not being ‘online’ or ‘offline.’ Having been through a dark period of transition, a sensibility around human rights will emerge in places where human rights are currently protected and will find itself under greater protection in many more places, not necessarily under the umbrella term of ‘human rights.’”

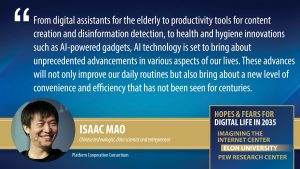

Isaac Mao, Chinese technologist, data scientist and entrepreneur, said, “Artificial Intelligence is poised to greatly improve human well-being by providing assistance in processing information and enhancing daily life.

“From digital assistants for the elderly to productivity tools for content creation and disinformation detection, to health and hygiene innovations such as AI-powered gadgets, AI technology is set to bring about unprecedented advancements in various aspects of our lives.

“These advances will not only improve our daily routines but also bring about a new level of convenience and efficiency that has not been seen for centuries. With the help of AI, even the most mundane tasks such as brushing teeth or cutting hair can be done with little to no effort and concern, dramatically changing the way we have struggled for centuries.”

Michael Muller, a researcher for a top global technology company who is focused on human aspects of data science and ethics and values in applications of artificial intelligence, wrote, “We will learn new ways in which humans and AIs can collaborate. Humans will remain the center of the situation. That doesn’t mean that they will always be in control, but they will always be in control of when and how they delegate selected activities to one or more AIs.”

Terri Horton, work futurist at FuturePath, said, “Digital and immersive technologies and artificial intelligence will continue to exponentially transform human connections and knowledge across the domains of work, entertainment and social engagement. By 2035, the transition of talent acquisition, onboarding, learning and development, performance management and immersive remote work experiences into the metaverse – enabled by Web3 technologies – will be normalized and optimized.

“Work, as we know it, will be absolutely transformed. If crafted and executed ethically, responsibly and through a human-centered lens, transitioning work into the metaverse can be beneficial to workers by virtue of increased flexibility, creativity and inclusion. Additionally, by 2035, generative artificial intelligence (GAI) will be fully integrated across the employee experience to enhance and direct knowledge acquisition, decision-making, personalized learning, performance development, engagement and retention.”

Daniel Pimienta, leader of the Observatory of Linguistic and Cultural Diversity on the Internet, commented, “I hope to see the rise of the systematic organization of citizen education on digital literacy with a strong focus on information literacy. This should start in the earliest years and carry forward through life. I hope to see the prioritization of the ethics component (including bias evaluation) in the assessment of any digital system. I hope to see the emergence of innovative business models for digital systems that are NOT based on advertising revenue, and I hope that we will find a way to give credit to the real value of information.”

The remarks made by the respondents to this canvassing reflect their personal positions and are not the positions of their employers. The descriptions of their leadership roles help identify their background and the locus of their expertise. Some responses are lightly edited for style and readability.

In the next section, we highlight the remarks of experts who gave some of the most wide-ranging yet incisive responses to our request for them to discuss human agency in digital systems in 2035. Following that, in Chapter 2, we offer a set of longer, broader essays written by leading expert participants. That is followed by additional sections covering respondents’ comments organized under the sets of themes about harms and benefits set out in the tables above. A final chapter covers some summary statements about ChatGPT and other trends in digital life.

1. A Sampling of Overarching Views on Digital Change by 2035

The following incisive and informative responses to our questions about the positive and negative impact of digital change by 2035 represent some of the big ideas shared by several of the hundreds of thought leaders who participated in this canvassing.

Working to meet the challenges raised by digital technologies will inspire humanity to grow and benefit as a species

Stephan Adelson, president of Adelson Consulting Services and an expert in the internet and public health, said, “The recent release of several AI tools in their various categories begins a significant shift in the creative and predictive spaces. Creative writing, predictive algorithms, image creation, computations, even the process and products of thought itself are being challenged. I predict that the greatest potential for benefit to mankind by 2035 from digital technologies will come through the challenges their existence creates. We, as a species, are creators of technologies that are learning and growing their productive capabilities and creative capacities. As these tools grow, learn and become integrated into our everyday lives, both personal and professional, they will become major competitors for resources, financial, social and as entertainment. I feel it is in this competition that they will provide our greatest growth and benefits as a species. As we compete with our digital creations we will be forced to grow or become dependent on what we have created and can no longer exceed.”

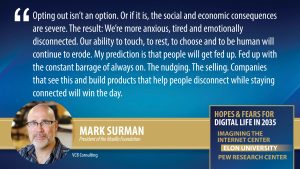

Our ability to touch, to rest, to choose and to be human will continue to erode; we are more anxious, tired and emotionally disconnected; we need new tech to get us off the tech

Mark Surman, president of the Mozilla Foundation, commented, “The most harmful thing I can think of isn’t a change as much as a trend: The ability for us to disconnect will increasingly disappear. We’re building more and more reasons to be always on and instantly responsive into our jobs, our social lives, our public spaces, our everything.

“The combination of immersive technologies and social pressure will make this worse. Opting out isn’t an option. Or, if it is, the social and economic consequences are severe. The result: we’re more anxious, tired and emotionally disconnected. Our ability to touch, to rest, to choose and to be human will continue to erode.

“My biggest prediction is that people will get fed up. Fed up with the constant barrage of always on. The nudging. The selling. The treadmill. Companies that see this coming – and that can build tech products that help people turn down the volume and disconnect while staying connected – will win the day. Clever, humane use of AI will be a key part of this.”

Once trust is lost, it is difficult to reclaim, and digital ‘reality’ is dangerously amenable to distortion and manipulation that can lead to the erosion of it at all levels of society

Larry Lannom, vice president at the Corporation for National Research Initiatives, observed, “In thinking about the potential harm that exponentially improved digital technologies could wreak by 2035 I find that I have two levels of concern. The first is the fairly obvious worry that advanced technologies could be used by malevolent actors, at the state, small-group or individual level, to cause damage beyond what they could achieve with today’s tools. AI-based autonomous weapons, new pathogens, torrents of misinformation precision-crafted to appeal to the recipients and total state-level intrusion into the private lives of the citizenry are just some of the worrying possibilities that are all too easy to imagine evolving by 2035.

“A more insidious worry, however, is the potential erosion of trust at all levels of society and government. More and more of our lives are affected by or even lived in the digital realm and as that environment increases in size and sophistication it seems likely that the impact will increase. But digital ‘reality’ is much more amenable to distortion and manipulation than even the worst human-level deception.

“The ability of advanced computing systems of all kinds to convincingly generate fake audio and video representations of any public figures, to generate overwhelming amounts of reasonable-sounding misinformation, and to use detailed personal information, gathered legally or illegally, to craft precision messaging for manipulation beyond what can be done today could contribute to a complete lack of trust at all levels of society. Once trust is lost it is difficult to reclaim.”

AI-driven healthcare may include home air-quality and waste stream assessments, but the U.S. will lag behind other regions of the world while China will lead

Mark Schaefer, a business professor at Rutgers University and author of “Marketing Rebellion,” wrote, “In America, healthcare progress will come from startups and boutique clinics that offer wealthy individuals environmental screening devices and pharmaceutical solutions customized for precise genetic optimization. The smart home of the future will analyze air quality, samples from the bathroom waste stream and food consumption to suggest daily health routines and make automatic environmental and pharmaceutical adjustments.

“Overall, an AI-driven healthcare system will be radically streamlined to be highly personal, effective and efficient in many developed regions of the world – excluding the United States. While the U.S. will remain the leader in developing new healthcare technology, the country will lag most of the world in this tech adoption due to powerful lobbyists in the healthcare industry and a dysfunctional government unable to legislate reform. However, progress will take off rapidly in China, a country with a rapidly aging population and a government that will dictate speedy reform.

“Dramatic improvements will also occur in countries with socialized healthcare, since efficiency means a dramatic improvement in direct government spending. Expected lifespan will increase by 10% in these nations by 2035. China’s population will have declined dramatically by 2035, a symptom of the one-child policy, rapid urbanization and social changes. China will attract immigrant workers to boost its population by offering free AI-driven healthcare.”

Advancing federation and decentralization, mandating interoperability and an emphasis on subsidiarity in platform governance are key to the future

Cory Doctorow, activist journalist and author of “How to Destroy Surveillance Capitalism,” wrote, “I hope to see an increased understanding of the benefits of federation and decentralization; interoperability mandates, such as the Digital Markets Act, and a renewed emphasis on interoperability as a means of lowering switching costs and disciplining firms; a decoupling of decentralization from blockchain (which is nonsense); and an emphasis on subsidiarity in platform governance. Among the challenges are new compliance duties for intermediaries – new rules that increase surveillance and algorithmic filtering while creating barriers to entry for small players – and ‘link taxes’ and other pseudocopyrights that control who can take action to link to, quote and discuss the news.”

Equitable access to essential human services, to online opportunities, must be achieved

Cathy Cavanaugh, chief experience officer at the University of Florida Lastinger Center for Learning, said, “Inequitable access to technology and services exacerbates existing social and economic gaps. Too few governments balance capitalism and social services in ways that serve the greatest needs.

“These imbalances look likely to continue rather than to change because of increasing power imbalances in many countries. Equitable access to essential human services is crucial. Technology now exists in most locations that is affordable, available in most languages and for people of many physical abilities and easy to learn.

“The most beneficial use of this personal technology is to connect individuals, families and communities to necessary and life-changing services using secure technology that can streamline and automate these services, making them more accessible.

“We have seen numerous examples including microfinance, apps that help unhoused people find shelter, online education, telehealth and a range of government services. Too many people still experience poverty, bias and lack of access to serve their needs and create opportunities for them to fully participate in and contribute to their communities.”

The intelligence and effectiveness of AI systems operating alone will be overestimated, creating catastrophic failure points

Jon Lebkowsky, writer and co-wrangler of Plutopia News Network, previously CEO, founder and digital strategist at Polycot Associates, commented, “Relying too much on AI and failing to factor in human judgment could have potentially disastrous consequences. I’m not concerned that we’ll have a malignant omnipotent AI like ‘Skynet,’ but that the intelligence and effectiveness of AI systems operating alone will be overestimated, creating catastrophic failure points. AI also has the potential to be leveraged for surveillance and control systems by autocratic governments and organizations to the detriment of freedom and privacy. The misuse of technology is especially likely to the extent that those responsible for governance and regulation misunderstand relevant technologies.”

Lebkowsky offered three specific potential issue areas for the future:

- “If we fail to shift from fossil fuels to cleaner, more efficient technologies, we may fail to manage our response to climate change effectively and leverage innovations that could support adaptation and/or mitigation of global warming.

- “If we fail to address the monopolistic power of ‘big tech’ and social manipulation via centralized social media, we may see increasing uses of propaganda and online influence to gain power for its own sake, potentially evolving dystopian autocracies and losing the democratic and egalitarian intentions that are so challenging to sustain.

- “Medical and scientific ignorance and suspicion, as we see in the current anti-vaccine movement, could offset medical advances. We must restore trust in scientific and medical expertise through education, and through ensuring that scientific and medical communities adhere to standards that will make them inherently trustworthy.”

Digital life offers opportunities to enhance longevity, health, access to resources, more

Micah Altman, social and information scientist at the Center for Research in Equitable and Open Scholarship at MIT, said, “Whether digital or analog, there are five dimensions to individual well-being: longevity, health, access to resources, subjective well-being and agency over making meaningful life choices. Within the last decade the increasing digitalization of human activities has contributed substantially in each of these areas, providing benefits in four of the five areas.

“Digital life is greatly expanding access to online education (especially through open online courses and increasingly through online degree and certification programs); health information and health treatment (especially through telehealth in the area of behavioral wellness); the opportunity to work from remote locations (which is particularly beneficial for people with disabilities); and the ability to engage with government through online services, access to records, and modes of online participation (e.g., through online public hearings). Expansion in most of these areas is likely to continue over the next dozen years.”

It is likely that a highly visible abuse or scandal with clearly identifiable victims will be needed to galvanize the public against digital excesses

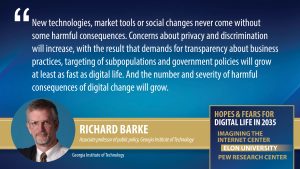

Richard Barke, associate professor of public policy at Georgia Institute of Technology, responded, “The shift from real to digital life probably will not decelerate. The use of digital technologies for shopping, medical diagnosis and interpersonal relations will continue. The use of data analytics by businesses and governments also will continue to grow. And the number and severity of harmful consequences of these changes will also grow. New technologies, market tools or social changes never come without some harmful consequences. Concerns about privacy and discrimination will increase, with the result that demands for transparency about business practices, targeting of subpopulations and government policies will grow at least as fast as digital life.

“Those demands are not likely to be answered in the absence of significant harmful or menacing events that catch the attention of the public, the media and eventually policymakers. The environmental movement needed a Rachel Carson and a Love Canal in the 1960s and 1970s as policy entrepreneurs and focusing events. The same is true for many other significant changes in business and government decision-making.

“Unfortunately, it is likely that by 2035 some highly visible abuse or scandal with clearly identifiable victims and culprits will be needed to provide an inflection point that puts an aggrieved public in the streets and on social media, in courtrooms and in legislative hallways, resulting in a new regime of law and regulation to constrain the worst excesses of the digital world. But, even then, is it likely – or even possible – that the speed of reforms will be able to keep up with the speed of technological and business innovations?”

‘We are rewriting childhood for youngsters ages 0 to 5, and it is not in healthy ways’

Jane Gould, founder of DearSmartphone, responded, “We have been rewriting the concept of screen time and exposure. This trend began in the 2000s but the introduction of mobility and iPhones and mobile apps in 2007 accelerated the change. We are rewriting childhood for youngsters ages 0 to 5, and it is not in healthy ways. All infants must go through discrete stages of cognitive and physical growth. There is nothing that we can do to speed these up, nor should we. Yet from their earliest moments we put young babies in front of digital devices and use them to entertain, educate and babysit them.

“These devices use artifices like bright lights and colors to hold their attention, but they do not educate them in the way that thoughtful, watchful parents can. More than anything else, these electronics keep children from playing with the traditional hand-held toys and games that use all five senses to keep babies busy and engaged, with play and in two-way exchanges. Meanwhile, parents are distracted and pay less attention to their infants because they stay engaged with their own personal phones and touchscreens.”

Digital access will grow and programs will become more user-friendly

Henning Schulzrinne, Internet Hall of Fame member, Columbia University professor of computer science and co-chair of the Internet Technical Committee of the IEEE, predicted, “Amplified by machine learning and APIs, low-code and no-code systems will make it easier for small businesses and governments to develop user-facing systems to increase productivity and ease the transition to e-government. Government programs and consumer demand will make high-speed (100 Mb/s and higher) home access, mostly fiber, near-universal in the United States and large parts of Europe, including rural areas, supplemented by low-Earth orbiting satellites for covering the most remote areas.

“We will finally move beyond passwords as the most common means of consumer authentication, making systems easier to use and eliminating many security vulnerabilities that endanger systems today.

“On the ‘worst’ side of change, the concentration of ad revenue and the lack of a viable alternative source of income will further diminish the reach and capabilities of local news media in many countries, degrading the information ecosystem. This will increase polarization, facilitate government corruption and reduce citizen engagement.”

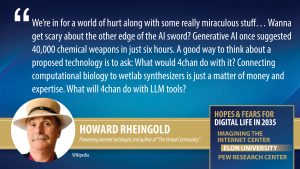

‘Wanna get scary about the other edge of the AI sword? What will 4chan do with it?’

Howard Rheingold, pioneering internet sociologist and author of “The Virtual Community,” wrote, “If we are honestly looking back at the last decades of rapid technological change for hints about decades to come, we’re in for a world of hurt along with some really miraculous stuff. I sense that we are at an inflection point in the conduct of science as significant as the introduction of computers: the use of machine learning techniques as scientific thinking and knowledge tools. Proteins, for just one example, are topologically complex and can fold into a large number of possible shapes. Many immune-system and anti-cancer therapies rely on matching the shape of proteins on the surface of a cell. Now, AI can propose previously unknown proteins of medical significance.

“Machine Learning (oversimplified) uses iterative computations modeled on the way neurons work. It can be applied to datasets other than the omniversal ones sought by large language models (LLMs). LLMs don’t ‘know,’ but the way significant knowledge can be parsed out of it is, in my opinion, impressive, although the technology is in its infancy. Yes, it swallows all the bull along with the good info, and yes, it is unreliable and makes stuff up, and no, the models are tools, they are not General Intelligence. They don’t understand. They do statistics. Think of them as thinking-knowledge tools.

“As mathematics and computers come to enable human minds to go places they were previously unable to explore, I see a lot of change coming from this symbiosis of machine learning and human production of words, images, sounds and code. Computational biology is a good example of this two-edged miracle.

“Wanna get scary about the other edge of the AI sword? Generative AI once suggested 40,000 chemical weapons in just six hours. I recall that Bill Joy wrote a Wired magazine essay (23 years ago!) titled ‘Why the Future Doesn’t Need Us.’ In that essay he mentioned affordable desktop wetlabs, capable of creating malicious organisms. A good way to think about a proposed technology is to ask: What would 4chan do with it? Connecting computational biology to wetlab synthesizers is just a matter of money and expertise. What will 4chan do with LLM tools?”

From lentil soup recipes, pizza and Bollywood music to scan-reading, customer service and newswriting, tech has upended how we give and get information, for good and bad

Alan D. Mutter, consultant and former Silicon Valley CEO, said, “The magic of technology enables me to Google lentil soup recipes, trade stocks in the park, stream Bollywood music and Zoom with friends in Germany. Without question, tech has solved the eternally vexing P2P problem – the rapid, friction-free delivery of hot-ish pizza to pepperoni-craving persons. Techno thingies like software, hardware and networks will get faster and somewhat better (albeit more complex) but probably not cheaper. Here’s what I mean: For no additional charge, the latest Apple Watches will call 911 if they think you fell. It’s a good idea and the feature actually has saved some lives. But it also is producing an overwhelming number of false alarms. So, it is a good thing that sometimes is a bad thing.”

Mutter offered these thoughts on future AI applications:

- “AI probably will do a better job of reading routine scans than radiologists and might do a better job than human air traffic controllers who sometimes vector two planes to the same runway.

- “AI undoubtedly will answer all phones everywhere, cutting costs but also further compromising the quality of customer service at medical offices, insurance companies, tech-support lines and all the rest.

- “AI will produce all forms of media content but likely without the elan and judgment formerly contributed by humans.

- “AI probably will be more accurate than humans at doing math but less savvy at sorting fact from fiction and nuance from nuisance.

“Technology has upended forever the ways we get and give information. We now live in a Tower of Babel where yadda-yadda moves unchecked, unmoderated and unhinged at the speed of light, polluting and corrupting the public discourse. This is perilous for a democracy like the United States. I am afraid for our republic.”

‘Societies may become entirely paralyzed, caught between an inability to rely on facts for basic cooperation, or trapped between warring factions, or both’

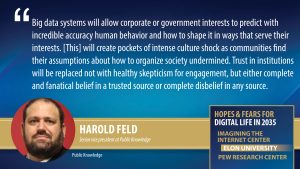

Harold Feld, senior vice president at Public Knowledge, predicted, “Reliable, affordable high-speed broadband will become as ubiquitous in the world (including the developing world) as telephone service was in the United States in the late 20th century. The actual technology will vary greatly depending on country, and we will still see speed differences and other quality-of-service differences that will maintain a digital divide. But the combination of available communications technology and solar-operated systems will enable a wide range of benefits. These will include:

- “Far more efficient resource tracking and allocation and far more efficient environmental monitoring will enable dramatic increases in food and clean water distribution where needed and will help to predict potential environmental disasters with greater accuracy and certainty.

- “Greater communication potential will enable vast improvements in distance learning and telemedicine. In countries where health professionals are scarce, or where travel is difficult, a wealth of diagnostic tools and a broadband connection will allow a handful of trained first responders to treat people locally under the guidance of experienced and more highly trained medical professionals. Necessary resources such as antibiotics will be delivered by drones, and local personnel will be guided in how to administer them and provide follow-up care. As a last resort, doctors can order medical evacuations.

- “Children will have access to education in their native language. Artificial expenses such as uniforms will be eliminated as a requirement. Girls will be able to access equal education without fear of assault.

“Yet here’s the thing: Widespread, ubiquitous broadband could easily broaden ubiquitous surveillance for corporate reasons and to aid repressive governments. Big data systems will be able to sort the noise from the signal and allow corporate or government interests to predict with incredible accuracy human behavior and how to shape it in ways that best serve their interests. Widespread access to others will create pockets of intense culture shock as communities find their basic assumptions about how to organize society undermined.

“Basic trust in institutions will be replaced not with healthy skepticism for engagement, but either complete and fanatical belief in a trusted source or complete disbelief in any source. To slightly paraphrase William Butler Yeats, ‘Mere anarchy is loosed upon the world … The ceremony of innocence is drowned. The best will lack all conviction, while the worst will be filled with passionate intensity.’ Societies may become entirely paralyzed, caught between an inability to rely on facts for basic cooperation, or trapped between warring factions, or both.

“Copyright and technology to manage microtransactions will create huge gaps in knowledge between the haves and have-nots, as even basic educational material becomes subject to limitations on sharing and requirements for access fees. Ownership of books or other educational media will become a thing of the past, as every digital source of knowledge will be licensed rather than owned. Book printing will wither away, so that modern educational materials will be inaccessible to those who cannot afford them.

“For the same reason, innovation will slow and become the province of a privileged few able to negotiate access to the needed software tools. Even basic mechanical inventions will have digital locks and software to prevent any tinkering.”

2. Expert Essays on the Expected Impact of Digital Change By 2035

Most respondents to this canvassing wrote brief reactions to this research question. However, a number of them wrote multilayered responses in a longer essay format. This essay section of the report is quite lengthy, so we first offer a sampler of some of these essayists’ comments.

- Liza Loop observed, “Humans evolved both physically and psychologically as prey animals eking out a living from an inadequate supply of resources. … The biggest threat here is that humans will not be able to overcome their fear and permit their fellows to enjoy the benefits of abundance brought about by automation and AI.”

- Richard Wood predicted, “Knowledge systems with algorithms and governance processes that empower people will be capable of curating sophisticated versions of knowledge, insight and something like ‘wisdom’ and subjecting such knowledge to democratic critique and discussion, i.e., a true ‘democratic public arena’ that is digitally mediated.”

- Matthew Bailey said he expects that “AI will assist in the identification and creation of new systems that restore a flourishing relationship with our planet as part of a new well-being paradigm for humanity to thrive.”

- Judith Donath warned, “The accelerating ability to influence our beliefs and behavior is likely to be used to exploit us; to stoke a gnawing dissatisfaction assuageable only with vast doses of retail therapy; to create rifts and divisions and a heightened anxiety calculated to send voters to the perceived safety of domineering authoritarians.”

- Kunle Olorundare said, “Human knowledge and its verifying, updating, safe archiving by open-source AI will make research easier. Human ingenuity will still be needed to add value – we will work on the creative angles while secondary research is being conducted by AI. This will increase contributions to the body of knowledge and society will be better off.”

- Jamais Cascio said, “It’s somewhat difficult to catalog the emerging dystopia because nearly anything I describe will sound like a more-extreme version of the present or an unfunny parody. … Simulated versions of you and your mind are very likely on their way, going well beyond existing advertising profiles.”

- Lauren Wilcox explained, “Interaction risks of generative AI include the ability for an AI system to impersonate people in order to compromise security, to emotionally manipulate users and to gain access to sensitive information. People might also attribute more intelligence to these systems than is due, risking overtrust and reliance on them.”

- Catriona Wallace looked ahead to in-body tech: “Embeddable software and hardware will allow humans to add tech to their bodies to help them overcome problems. There will be AI-driven, 3D-printed, fully-customised prosthetics. Brain extensions – brain chips that serve as digital interfaces – could become more common. Nanotechnologies may be ingested.”

- Stephen Downes predicted, “Cash transactions will decline to the point that they’re viewed with suspicion. Automated surveillance will track our every move online and offline, with AI recognizing us through our physical characteristics, habits and patterns of behaviour. Total surveillance allows an often-unjust differentiation of treatment of individuals.”

- Giacomo Mazzone warned, “With relatively small investments, democratic processes could be hijacked and transformed into what we call ‘democratures’ in Europe, a contraction of the two French words for ‘democracy’ and ‘dictatorship.’ AI and a distorted use of technologies could bring mass-control of societies.”

- Christine Boese warned, “Soon all high-touch interactions will be non-human. NLP communications will seamlessly migrate into all communications streams. They won’t just be deep fakes, they will be ordinary and mundane fakes, chatbots, support technicians, call center respondents and corporate digital workforces … I see harm in ubiquity.”

- Jonathan Grudin spoke of automation: “I foresee a loss of human control in the future. The menace isn’t control by a malevolent AI. It is a Sorcerer’s Apprentice’s army of feverishly acting brooms with no sorcerer around to stop them. Digital technology enables us to act on a scale and speed that outpaces human ability to assess and correct course. We see it already.”

- Michael Dyer noted we may not want to grant rights to AI: “AI researchers are beginning to narrow in on how to create entities with consciousness; will humans want to give civil rights and moral status to synthetic entities who are not biologically alive? If humans give survival goals to synthetic agents, then those entities will compete with humans for survival.”

- Avi Bar-Zeev preached empowerment over exploitation: “The key difference between the most positive and negative uses of XR, AI and the metaverse is whether the systems are designed to help and empower people or to exploit them. Each of these technologies sees its worst outcome quickly if it is built to benefit companies that monetize their customers.”

- Beth Noveck predicted that AI could help make governance more equitable and effective and raise the quality of decision-making, but only if it is developed and used in a responsible and ethical manner, and “if its potential to be used to bolster authoritarianism is addressed proactively.”

- Charalambos Tsekeris said, “Digital technology systems are likely to continue to function in shortsighted and unethical ways, forcing humanity to face unsustainable inequalities and an overconcentration of technoeconomic power. These new digital inequalities could amount to serious, alarming threats and existential risks for human civilization.”

- Alejandro Pisanty wrote, “Human connection and human rights are threatened by the scale, speed and lack of friction in actions such as bullying, disinformation and harassment. The invasion of private life available to governments facilitates repression of the individual, while the speed of Internet expansion makes it easy to identify and attack dissidents.”